Our paper on an Explanation Evaluation Framework has just been published in the Information Systems Frontiers journal by Springer Nature [link]

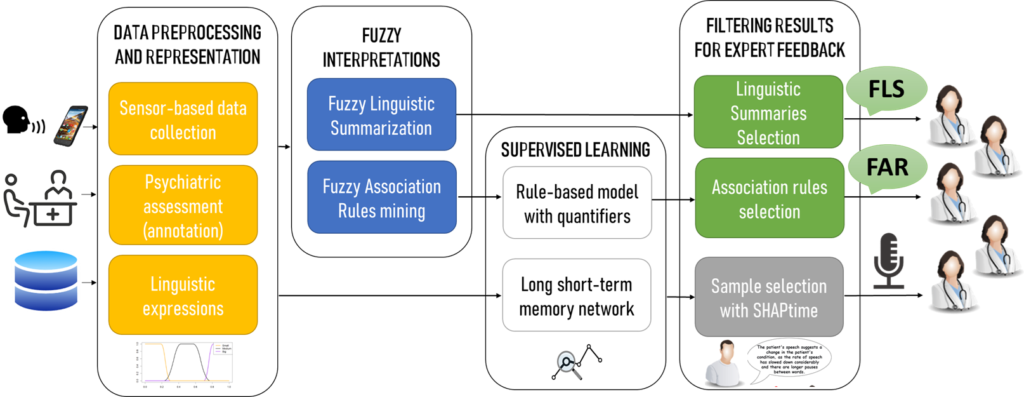

Bipolar affective disorder and depression are among the most prevalent mental health conditions, and recent advances highlight the role of sensors and computational methods in monitoring them. However, current Artificial Intelligence (AI)-based systems, while accurate, often lack transparency, which limits their trustworthiness and clinical adoption. Furthermore, the state of the art still lacks clear guidelines on how to design human-centric validation approaches for the interpretations or explanations produced by intelligent systems. Such guidelines are needed to pave the way towards trustworthy AI systems that clinicians are ready to adopt. This paper presents a novel evaluation approach that integrates supervised learning with fuzzy information granules derived from fuzzy association rules and linguistic summaries to enhance interpretability. Its main innovation lies in the human-centric evaluation methodology. Our use case study in a mental health monitoring setting demonstrates the framework’s ability to reveal meaningful relationships between sensor data and mental states. Thus, this work contributes to the development of trustworthy AI systems in compliance with emerging regulatory standards.
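For readers unfamiliar with linguistic summaries, the sketch below shows one common way to score a statement such as "most recordings with a high acoustic feature value coincide with a given mental state". It is only an illustration under our own assumptions, not the implementation from the paper: the function names (`ramp_up`, `most`, `summary_truth`), the breakpoints of the quantifier "most", and the use of the minimum as the fuzzy AND are all hypothetical choices in the style of Yager's classic formulation.

```python
from typing import Sequence


def ramp_up(x: float, a: float, b: float) -> float:
    """Membership that rises linearly from 0 at a to 1 at b."""
    if x <= a:
        return 0.0
    if x >= b:
        return 1.0
    return (x - a) / (b - a)


def most(proportion: float) -> float:
    """Fuzzy quantifier 'most'. The breakpoints 0.3 and 0.8 are assumed."""
    return ramp_up(proportion, 0.3, 0.8)


def summary_truth(
    qualifier: Sequence[float], summarizer: Sequence[float]
) -> float:
    """Degree of truth of 'Most records that are <qualifier> are <summarizer>'.

    Both arguments hold membership degrees in [0, 1], one per record.
    The fuzzy AND is taken as the minimum (an assumption of this sketch).
    """
    if len(qualifier) != len(summarizer):
        raise ValueError("qualifier and summarizer must have equal length")
    total = sum(qualifier)
    if total == 0:
        return 0.0  # no record satisfies the qualifier at all
    matched = sum(min(q, s) for q, s in zip(qualifier, summarizer))
    return most(matched / total)


# Hypothetical membership degrees for four recordings (not real data).
high_feature = [1.0, 1.0, 1.0, 1.0]   # degree of 'feature value is high'
target_state = [1.0, 1.0, 0.0, 0.0]   # degree of 'recording in target state'
print(summary_truth(high_feature, target_state))
```

A truth degree close to 1 means the sentence describes the data well, while a value near 0 means it does not. Sentences of this kind are what a human-centric evaluation would then present to clinicians for assessment.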

Our findings confirm that fuzzy logic-based interpretations of the patients’ acoustic features would be beneficial for both clinicians and patients. Among respondents, 75% agreed that the interpretations addressed important aspects of the clinical problem, and 91.7% agreed that additional interpretations would help psychiatrists in daily patient care. However, respondents were more critical of the interpretations' clarity and evidential support. Further work should focus on improving the conciseness and clarity of the automatically constructed fuzzy information granules.